AHSYNC BYTES - Weekly Digest (30th Mar, 2026)

From Google's chatbot switching tools to Wikipedia's AI crackdown — plus Angular deep dives and LLM hallucination fixes. Everything shaping the dev world this week, packed into one sharp read.

Hey builders 👋

The IT world moves fast, and we’re here to make sure you don’t fall behind. Welcome to this week’s digest, your go-to roundup of all the buzz in tech. We bring you the highlights that count, from the latest in frontend development to significant advances in AI and expert tips on Angular. Quick, relevant, and right on time.

🤖 AI & Machine Learning

You Can Now Transfer Your Chats and Personal Information from Other Chatbots Directly into Gemini

When it comes to AI chatbots, there’s currently a war on for consumer attention. All the big chatbot providers are looking to increase their user count and, in a minor coup for itself, Google just made it significantly easier for users of those other chatbots to defect to Gemini.

On Thursday, the company announced what it calls “switching tools,” new widgets that are designed to allow users to transfer “memories” (basically chunks of personal information) and even entire chat histories from other chatbots directly into Gemini. Users can easily share “key preferences, relationships, and personal context” in this way, the company says.

Wikipedia Cracks Down on the Use of AI in Article Writing

As AI makes inroads into the worlds of editorial and media, websites are scrambling to establish ground rules for its usage. This week, Wikipedia banned the use of AI-generated text by its editors — although it stopped short of banning AI outright from the site’s editorial processes.

In a recent policy change, the site now states that “the use of LLMs to generate or rewrite article content is prohibited.” This new language updates and clarifies previous, vaguer language that stated that LLMs “should not be used to generate new Wikipedia articles from scratch.”

AI in Wikipedia articles has become a contentious issue among the site’s sprawling, volunteer-driven community of editors. 404 Media reports that the new policy, which was put to a vote by the site’s editors, garnered majority support — 40 to 2.

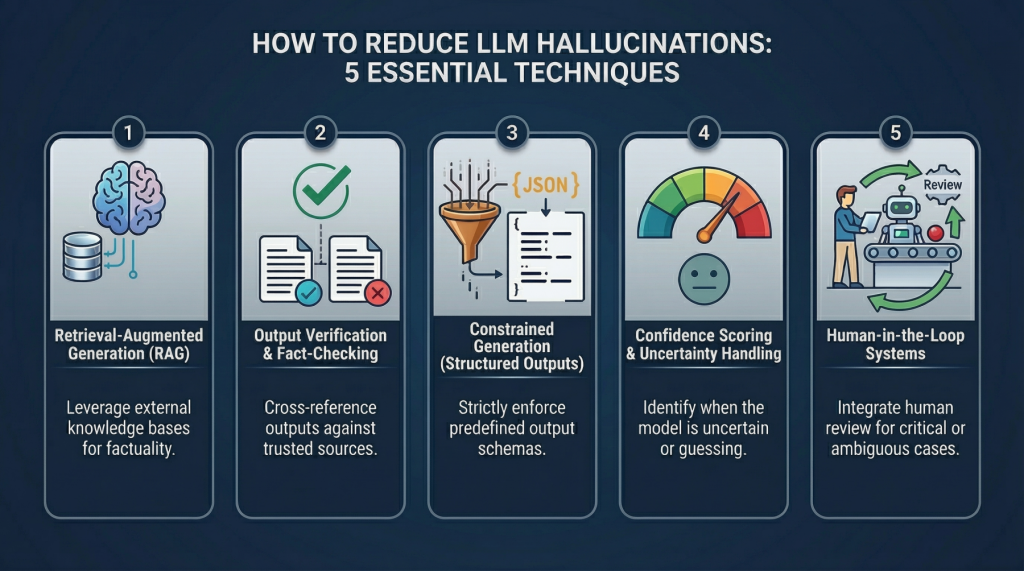

5 Practical Techniques to Detect and Mitigate LLM Hallucinations Beyond Prompt Engineering

In this article, you will learn why large language model hallucinations happen and how to reduce them using system-level techniques that go beyond prompt engineering.

Topics we will cover include:

- What causes hallucinations in large language models.

- Five practical techniques for detecting and mitigating hallucinated outputs in production systems.

- How to implement these techniques with simple examples and realistic design patterns.

Let’s get to it.
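As a rough illustration of what a system-level check can look like (not necessarily one of the five techniques in the linked article), here is a minimal sketch that flags answer sentences poorly supported by a retrieved source text. The function names, the word-overlap heuristic, and the 0.5 threshold are all assumptions made for this example. A production system would more likely use embeddings or an entailment model.

```typescript
// Hypothetical helper: lowercase, split on non-alphanumerics,
// and keep only words longer than three characters.
function contentWords(text: string): string[] {
  return text
    .toLowerCase()
    .split(/[^a-z0-9]+/)
    .filter((w) => w.length > 3);
}

// Fraction of a sentence's content words that also occur in the source.
function supportScore(sentence: string, source: string): number {
  const words = contentWords(sentence);
  if (words.length === 0) return 1; // nothing to verify
  const sourceWords = new Set(contentWords(source));
  const hits = words.filter((w) => sourceWords.has(w)).length;
  return hits / words.length;
}

// Returns the sentences whose support score falls below the threshold.
// The default of 0.5 is an arbitrary, assumed cutoff.
function flagUnsupported(
  answer: string,
  source: string,
  threshold: number = 0.5
): string[] {
  const sentences = answer.match(/[^.!?]+[.!?]*/g) || [];
  return sentences
    .map((s) => s.trim())
    .filter((s) => s.length > 0)
    .filter((s) => supportScore(s, source) < threshold);
}

// Made-up example data: the second sentence has no backing in the source.
const source =
  "The library exposes a parse function that returns a list of tokens.";
const answer =
  "The library exposes a parse function. It also ships a built-in neural translator.";

console.log(flagUnsupported(answer, source));
```

Flagged sentences can then be dropped, sent back to the model for revision, or surfaced to the user with a warning, depending on how strict the application needs to be.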

Mistral Releases a New Open Source Model for Speech Generation

French AI company Mistral released a new open source text-to-speech model on Thursday that can be used by voice AI assistants or in enterprise use cases like customer support. The model, which lets enterprises build voice agents for sales and customer engagement, puts Mistral in direct competition with the likes of ElevenLabs, Deepgram, and OpenAI.

The new model, called Voxtral TTS, supports nine languages: English, French, German, Spanish, Dutch, Portuguese, Italian, Hindi, and Arabic.

🚀 Angular

Implementing the Official Angular Claude Skills

In the previous article on Angular.love, we covered the foundational mechanics of Claude Skills and how they prevent language models from polluting modern codebases with legacy patterns. Left to its own devices, an LLM will confidently generate an Angular 8 component complete with constructor injection and a dedicated module file. We solved this previously by writing our own skills or relying on community packages.

Recently, the Angular core team merged the official angular-developer skill directly into the main framework repository. I will break down how this official skill is architected, why it utilizes an orchestrator pattern rather than a modular approach, and how we integrate it into a standard development workflow.

Angular Schematics Deep Dive — Part 2 – ng generate

In Part 1 we covered the architecture of Angular Schematics — the Tree, Rule, Source, SchematicContext, and the execution pipeline. Now it’s time to build something real.

We are going to keep this focused. By the end of this article you will have written a schematic that generates a single Angular component with your team’s custom selector prefix baked in — no configuration, no remembering the --prefix flag, no inconsistency across the codebase.
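To make the idea concrete, here is a minimal sketch of the core logic such a schematic might apply: turning a component name into a selector with a fixed prefix and rendering the component source. It is plain TypeScript rather than a real schematic, and the `acme` prefix, the function names, and the suffix-free class name are assumptions for illustration. An actual schematic would wrap this in a `Rule` that writes to the `Tree`, and would likely use the `strings` helpers from `@angular-devkit/core` instead of hand-rolled ones.

```typescript
// Hypothetical team prefix, baked in so nobody has to pass --prefix.
const TEAM_PREFIX = "acme";

// Convert "UserCard" or "user card" to "user-card".
function dasherize(name: string): string {
  return name
    .replace(/([a-z0-9])([A-Z])/g, "$1-$2")
    .replace(/[\s_]+/g, "-")
    .toLowerCase();
}

// Convert "user-card" or "user card" to "UserCard".
function classify(name: string): string {
  return dasherize(name)
    .split("-")
    .filter((part) => part.length > 0)
    .map((part) => part.charAt(0).toUpperCase() + part.slice(1))
    .join("");
}

// Build the selector, always applying the team prefix by default.
function buildSelector(name: string, prefix: string = TEAM_PREFIX): string {
  return `${prefix}-${dasherize(name)}`;
}

// Render the text of a minimal component file for the given name.
function renderComponent(name: string): string {
  return [
    `import { Component } from '@angular/core';`,
    ``,
    `@Component({`,
    `  selector: '${buildSelector(name)}',`,
    `  template: '<p>${dasherize(name)} works!</p>',`,
    `})`,
    `export class ${classify(name)} {}`,
    ``,
  ].join("\n");
}

console.log(renderComponent("UserCard"));
```

Keeping the prefix in one constant means every generated component gets the same selector convention without anyone having to remember a flag.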

Your AI is Coding Angular Wrong | Here's Why

If you are using AI to write Angular code in 2026, you are probably doing it wrong. 🚫

LLMs like Gemini 3 and Claude Opus are amazing, but they are trained on old data. They don't know about Angular 21, the new Angular Aria accessibility primitives, or how Tailwind CSS 4 actually works. They hallucinate, they use old syntax, and they break your build.

In this video, I’m showing you the Angular CLI MCP Server. This is the missing link that connects your AI directly to the latest documentation and best practices. We are going to set this up inside Google Antigravity (the next-gen IDE) and watch how it transforms a broken, hallucinated mess into a perfect, production-ready app using Signals and OnPush change detection. 🚀

In this tutorial, we cover:

- Why generic AI models fail with modern frameworks.

- How to configure the MCP (Model Context Protocol) Server.

- A side-by-side comparison: AI "guessing" vs. AI with Documentation Context.

- Building a fully accessible Autocomplete component from scratch.

Upcoming Events

- NG Belgrade Conf 2026: May 7, 2026 - Balkans

- IJS Conference: June 2-3, 2026 - San Diego, United States

- Angular Day: to be held in 2026 (sign up to get notified)

🌐 Web & Frontend

Build a Smart Financial Assistant with LlamaParse and Gemini 3.1

Extracting text from unstructured documents is a classic developer headache. For decades, traditional Optical Character Recognition (OCR) systems have struggled with complex layouts, often turning multi-column PDFs, embedded images, and nested tables into an unreadable mess of plain text.

Today, the multimodal capabilities of large language models (LLMs) finally make reliable document understanding possible. LlamaParse bridges the gap between traditional OCR and vision-language agentic parsing. It delivers state-of-the-art text extraction across PDFs, presentations, and images.

In this post, you will learn how to use Gemini to power LlamaParse, extract high-quality text and tables from unstructured documents, and build an intelligent personal finance assistant. As a reminder, Gemini models may make mistakes and should not be relied upon for professional advice.

My Next.js 16 Full Stack Crash Course (React 19 & Prisma)

Ready to master full-stack web development? In this complete Next.js crash course, you will learn how to build and deploy a production-ready application from scratch.

We are moving past basic React and diving deep into Next.js 16, building a real-world "Agent Skills Manager" app. You'll learn essential enterprise-level concepts like the App Router, file-based routing, and the differences between SSG, SSR, ISR, and CSR. By the end of this video, you will have a fully functional, authenticated app deployed live on Vercel.

What you will learn & use:

- Next.js 16 & React 19 Fundamentals

- Prisma ORM & PostgreSQL for Database Management

- Tailwind CSS & DaisyUI for rapid styling

- Persistent Authentication with Cookies

- Deploying to Vercel for Production

Jump to Play: Building with Gemini & MediaPipe

Vibe-coding with Gemini makes it easier than ever to build highly interactive games and apps, leveraging the power of MediaPipe to unlock real-time input control. MediaPipe provides cross-platform, off-the-shelf ML solutions for vision, audio, and language optimized for real-time on-device performance.

To illustrate what you can build with MediaPipe, we are introducing a new showcase gallery in Google AI Studio. Recently updated with the Antigravity agent, Google AI Studio is the perfect tool for quickly going from a "what if" idea to a polished, playable experience.

In this blog, we will share fun and simple ways to build apps that interact with the physical world by combining Gemini intelligence with MediaPipe’s real-time sensing capabilities.

Upcoming Events

- Smashing Conference: April 13-16, 2026 - Amsterdam

- FITC Conference: April 27-28, 2026 - Toronto, Canada

- Google I/O 2026: May 19-20, 2026 - Live Virtual Event

- Frontend Nation: June 3-4, 2026 - Free Online Live Event

💡 Bottom Line Up Front

This week's digest brings together a broad range of developments spanning AI policy, open-source tooling, frontend frameworks, and developer education. On the AI front, Google has introduced switching tools that allow users to migrate chat histories and personal data from competing chatbots directly into Gemini, while Wikipedia has formalized a ban on LLM-generated article content following an editor vote.

Mistral has entered the voice AI space with its new open-source text-to-speech model, Voxtral TTS, supporting nine languages and targeting enterprise use cases. On the development side, the Angular core team has merged an official Claude Skills integration into the framework repository, and several in-depth resources cover everything from Angular Schematics to full-stack Next.js 16 development with React 19 and Prisma.

I hope you find these helpful. If you do, please share our blog with others so they can join our amazing community on Discord (link below).

Don't miss out – join our awesome community to grow as a tech enthusiast, and to help others grow.

As always, Happy coding ❤️

Sponsor Us:

Promote your product to a focused audience of 4500+ senior engineers and engineering leaders.

Our newsletter delivers consistent visibility among professionals who influence technical direction and purchasing decisions.

Availability is limited. Secure your sponsorship by contacting ahsan.ubitian@gmail.com